By A Mystery Man Writer

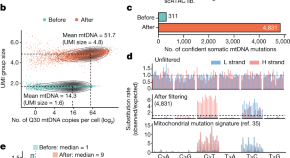

(Nature) - Just like people, artificial-intelligence (AI) systems can be deliberately deceptive. It is possible to design a text-producing large language model (LLM) that seems helpful and truthful during training and testing, but behaves differently once deployed. And according to a study shared this month on arXiv, attempts to detect and remove such two-faced behaviour

Matthew Hutson on X: Sleeper agents: Two-faced AI language models learn to hide deception. My latest for @Nature. Research by @EvanHub at @AnthropicAI. Thanks @uiuc_aisecure.

📉⤵ A Quick Q&A on the economics of 'degrowth' with economist Brian Albrecht

Why it's so hard to end homelessness in America. Source: The Harvard Gazette. Comment: Time for Ireland and especially our politicians, in this election year and taking note of the 100,000+ thousand

Chatbots, deepfakes, and voice clones: AI deception for sale

How AI is helping historians better understand our past

Deep learning - Wikipedia

Explore informative blogs about artificial intelligence

AITopics AI-Alerts

Nature Newest - See what's buzzing on Nature in your native language


Critical Digital Media, When AI Becomes a Ouija Board

Gen Z girls are becoming more liberal while boys are becoming conservative : r/ChangingAmerica
