Introducing MPT-30B, a new, more powerful member of our Foundation Series of open-source models, trained with an 8k context length on NVIDIA H100 Tensor Core GPUs.

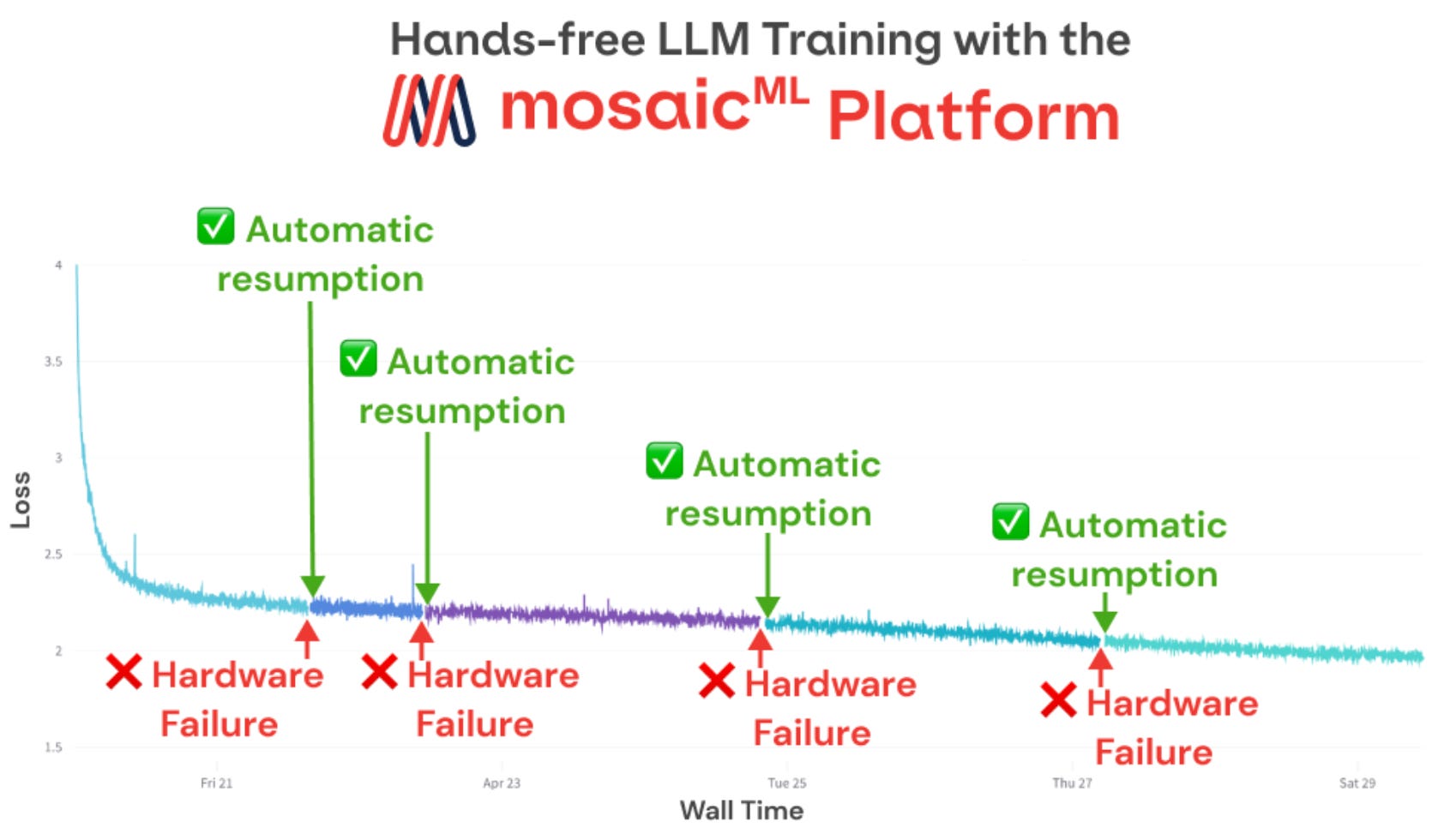

Train Faster & Cheaper on AWS with MosaicML Composer

MPT30b - NEW Open-Source Foundational Model That Blows Me Away 🤯

MPT-30B: MosaicML Outshines GPT-3 With A New LLM To Push The Boundaries of NLP

eluzhnica/mpt-30b-instruct-peft-compatible · Hugging Face

Democratizing AI: MosaicML's Impact on the Open-Source LLM Movement, by Cameron R. Wolfe, Ph.D.

[R] New Open Source LLM: GOAT-7B (SOTA among the 7B models) : r/MachineLearning

Can large language models reason about medical questions? - ScienceDirect

MosaicML's latest models outperform GPT-3 with just 30B parameters

Benchmarking and Defending Against Indirect Prompt Injection Attacks on Large Language Models

Survival of the Fittest: Compact Generative AI Models Are the Future for Cost-Effective AI at Scale - Intel Community

Abzu/mpt-30b-chat-q8 · Hugging Face

Trying MPT-30B on Google Colab | npaka
